When I check the scheduler status with the /health API, the scheduler is reported as unhealthy. The airflow.cfg file is attached for reference.
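To make the /health check above repeatable, here is a minimal sketch in Python that summarizes the scheduler part of the response. It assumes the endpoint returns JSON with a `scheduler` object holding `status` and `latest_scheduler_heartbeat`, as documented for Airflow 2.x. The function names, the base URL, and the 30-second threshold are illustrative assumptions, not values taken from this setup.

```python
import json
from datetime import datetime, timezone
from urllib.request import urlopen


def fetch_health(base_url):
    """Fetch the /health JSON from a webserver (base_url is an assumption)."""
    with urlopen(base_url.rstrip("/") + "/health", timeout=10) as resp:
        return json.load(resp)


def scheduler_health(payload, now=None, max_age_seconds=30):
    """Summarize the scheduler section of a /health payload.

    max_age_seconds is an assumed threshold chosen for illustration.
    """
    now = now or datetime.now(timezone.utc)
    sched = payload.get("scheduler", {})
    status = sched.get("status")
    raw = sched.get("latest_scheduler_heartbeat")
    age = None
    if raw:
        heartbeat = datetime.fromisoformat(raw)
        if heartbeat.tzinfo is None:
            # Treat naive timestamps as UTC (an assumption).
            heartbeat = heartbeat.replace(tzinfo=timezone.utc)
        age = (now - heartbeat).total_seconds()
    healthy = status == "healthy" and age is not None and age <= max_age_seconds
    return {"status": status, "heartbeat_age_seconds": age, "healthy": healthy}
```

For example, `scheduler_health(fetch_health("http://localhost:8080"))` would report the heartbeat age in seconds, assuming the webserver listens on that hypothetical address. A heartbeat that is days old, as in the error message discussed here, points at a scheduler process that has stopped or is stuck, not at a display problem in the UI.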

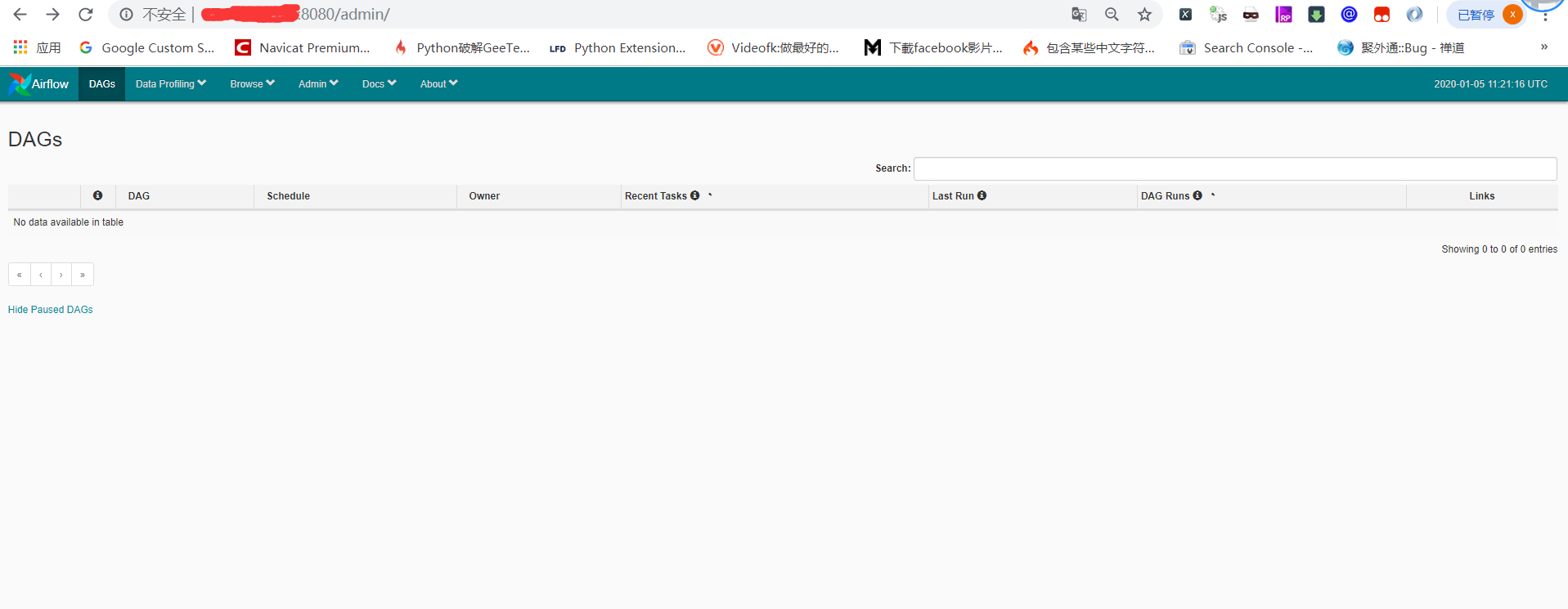

This problem occurs when no DAGs have been running for a while. The UI then shows the error "The scheduler does not appear to be running. The last heartbeat was received 2 days ago. The DAGs list may not update, and new tasks will not be scheduled." We get this error even though no DAGs are running. The scheduler has to work properly in order to execute the DAGs, which take around 3 to 5 hours to complete.

One way to reset the stuck runs is the command line, passing a start date after -s and an end date after -e:

airflow clear -s -e dagid

When that is not enough, we need to use the Airflow UI. In the menu, click the 'Browse' tab and open the 'DAG Runs' view. On this page, find the DAG runs that don't want to run, select them, and click the 'With selected' menu option. In the new menu, click the 'Delete' command.

If I restart the scheduler, it works fine for some hours, but then I get the same "The scheduler does not appear to be running" error again. When I trigger my DAG after that, it stays stuck in the in-progress state for a long time and none of its tasks run.

What does this error mean? I have a simple Airflow setup where I ran the Airflow Helm chart on a local kind/Kubernetes cluster with the CeleryKubernetesExecutor. The scheduler log shows a warning that begins "WARNING - Failed to get task '" (truncated in the log) and the following traceback, listed here from the outermost call to the innermost:

    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/__main__.py", line 38, in main
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/cli/cli_parser.py", line 51, in command
      return func(*args, **kwargs)
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/utils/cli.py", line 99, in wrapper
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/cli/commands/scheduler_command.py", line 75, in scheduler
      _run_scheduler_job(args=args)
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/cli/commands/scheduler_command.py", line 46, in _run_schedule
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/jobs/base_job.py", line 244, in run
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/jobs/scheduler_job.py", line 736, in _execute
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/jobs/scheduler_job.py", line 836, in _run_scheduler_loop
      next_event = n(blocking=False)
    File "/usr/local/lib/python3.7/sched.py", line 151, in run
      action(*argument, **kwargs)
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/utils/event_scheduler.py", line 36, in repeat
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/utils/session.py", line 71, in wrapper
      return func(*args, session=session, **kwargs)
    File "/home/airflow/.local/lib/python3.7/site-packages/airflow/jobs/scheduler_job.py", line 1356, in _find_zombies

There are very many reasons why your task might not be getting scheduled. Here are some of the common causes:

Does your script compile, and can the Airflow engine parse it and find your DAG object? To test this, run airflow dags list and confirm that your DAG shows up in the list.

It could be because you are running the scheduler command/process in the foreground and then closing the session. Try running the scheduler as a daemon process instead.

Increase the parameters related to DAG parsing: set dag-file-processor-timeout to at least 180 seconds (or more, if required).
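The suggestion to raise the DAG file processor timeout to at least 180 seconds can be checked against an existing airflow.cfg before changing anything. The sketch below assumes the hyphenated name used in the advice corresponds to the `dag_file_processor_timeout` option in the `[core]` section of airflow.cfg. The sample config text and the function name are hypothetical and only serve as an illustration.

```python
import configparser

# Hypothetical airflow.cfg fragment used only for this illustration.
SAMPLE_CFG = """
[core]
dag_file_processor_timeout = 50
"""


def dag_parsing_timeout(cfg_text, minimum=180):
    """Return (configured value, whether it meets the suggested minimum).

    The section and option names are assumptions about how the setting
    appears in airflow.cfg. A missing option yields (None, False).
    """
    # Interpolation is disabled so literal '%' characters elsewhere in a
    # real airflow.cfg do not raise parsing errors.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    value = parser.getint("core", "dag_file_processor_timeout", fallback=None)
    return value, (value is not None and value >= minimum)


if __name__ == "__main__":
    value, ok = dag_parsing_timeout(SAMPLE_CFG)
    print(f"dag_file_processor_timeout={value}, meets minimum: {ok}")
```

To run the same check on a real deployment, read the attached airflow.cfg into a string and pass it in place of `SAMPLE_CFG`. On managed or Helm-based installations the value may instead be set through an override or environment variable, in which case the file alone does not tell the whole story.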
